Run the following commands to download and place it in the right directory: $ wget Don’t download any versions with “-M1”, “-M2”, etc. Download the latest stable version of Scala from here.

INSTALL APACHE SPARK MAC OS INSTALL

Spark is written in Scala, so we need to install Scala to built Spark. There will be a download link at the top. This is the link we need to use to download: $ wget Click on this link and it will take you to a webpage.

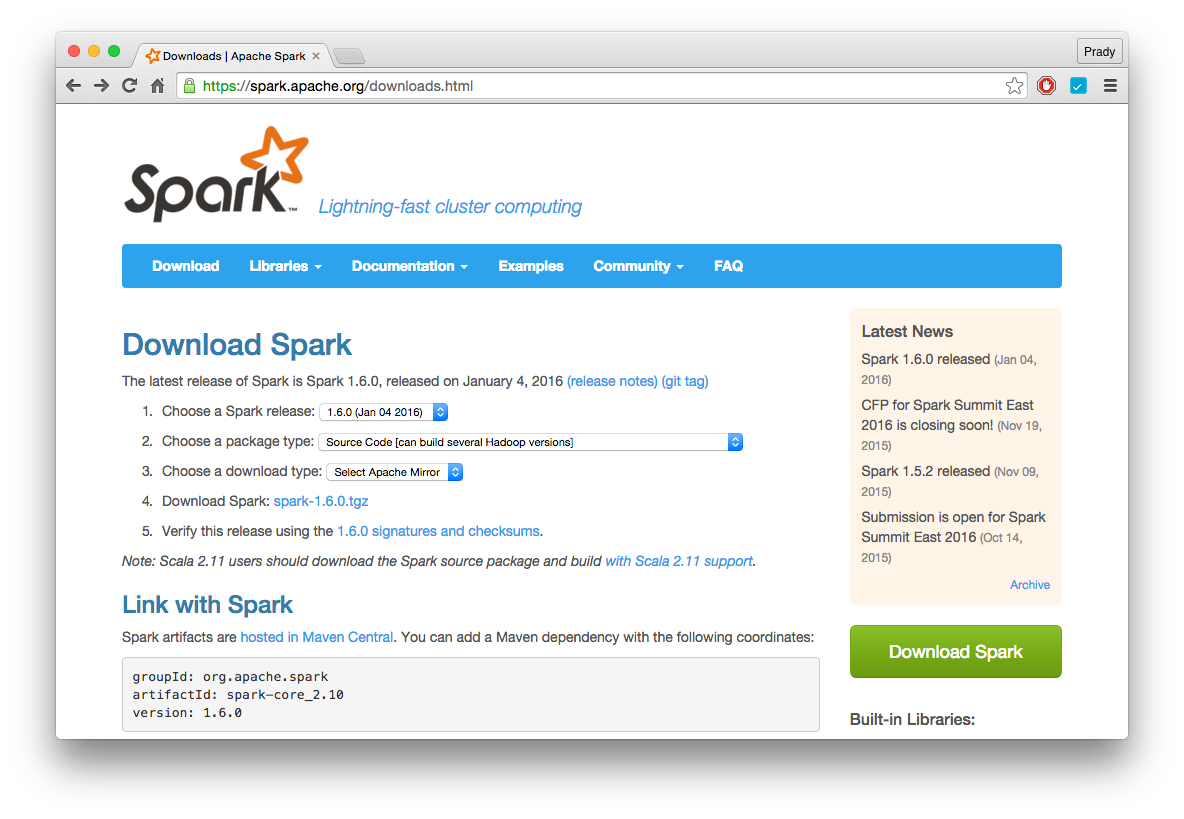

You will see “Download Spark” below it and a link next to it, but note that this is NOT the final download link. Choose a download type: Select Apache mirror.Choose a Spark release: pick the latest.Go to this site and choose the following options: We are ready to proceed with the installation. You need to install git (you’ll need it during the build process): $ sudo apt-get install git The following command will install the latest versions of OpenJRE and OpenJDK: $ sudo apt-get install -y default-jre default-jdk The first step is to update the packages: $ sudo apt-get update Let’s see how we can install it on Ubuntu. It is an open source big data processing framework that can process massive amounts of data at high speed using cluster computing.

This is where Apache Spark comes into picture. If you try to do it using your regular ways, you will never be able to do anything in time, let alone doing it in real-time. Not only that, we need to do it high efficiency. With so much data lying around, often ranging in petabytes and exabytes, we need super powerful systems to process it. This field of study is called Big Data Analysis. There’s so much data being generated in today’s world that we need platforms and frameworks that it’s mind boggling.
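The advice to avoid Scala versions marked “-M1”, “-M2”, and so on can also be checked mechanically before downloading. The following is a minimal sketch, assuming milestone builds are always identified by a `-M<number>` suffix in the version string. The function name `is_stable_scala_version` and the sample version strings are illustrative only and are not part of the installation steps.

```shell
#!/bin/sh
# Return 0 (success) when the version string has no milestone suffix,
# and 1 when it carries one such as -M1 or -M2.
# Hypothetical helper, written only to illustrate the rule in this guide.
is_stable_scala_version() {
    case "$1" in
        *-M[0-9]*) return 1 ;;   # milestone build: skip it
        *)         return 0 ;;   # no milestone suffix: treat as stable
    esac
}

# Sample version strings, chosen only to demonstrate the check.
for version in "2.11.7" "2.12.0-M1" "2.12.0-M2"; do
    if is_stable_scala_version "$version"; then
        echo "$version: stable"
    else
        echo "$version: milestone, skip"
    fi
done
```

This sketch only covers the “-M” suffix named in this guide. Other pre-release markers, such as release candidates tagged “-RC”, would pass the check, so it is worth confirming the version by eye on the download page as well.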